26. June 2024

Genetic algorithms

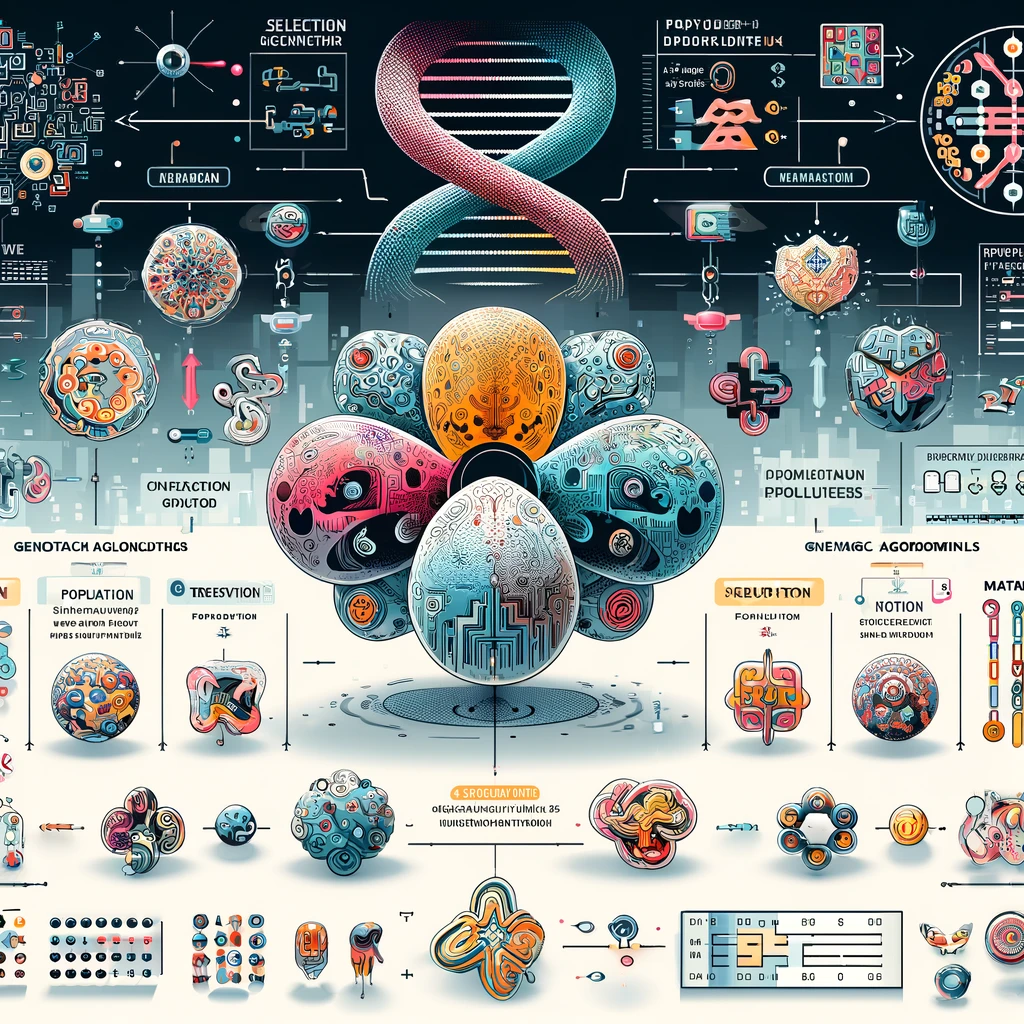

A population-based metaheuristic that combines selection, crossover, and mutation to search for near-optimal solutions

Introduction

Genetic algorithms are a class of optimization algorithms inspired by the principles of natural selection and genetics. They are widely used for complex optimization problems where gradient-based or exhaustive methods struggle, for example because the objective is non-differentiable or has many local optima.

What are Genetic Algorithms?

Genetic algorithms (GAs) are search heuristics that mimic the process of natural evolution. They operate on a population of potential solutions, applying genetic operators such as selection, crossover, and mutation to evolve solutions towards optimality. They are particularly useful for problems with large, complex search spaces.

The Concept Behind Genetic Algorithms

The fundamental idea behind genetic algorithms is to evolve a population of candidate solutions over successive generations. Each candidate solution is encoded as a chromosome, and the fitness of each solution is evaluated using a fitness function. The algorithm iteratively applies genetic operators to create new generations, selecting the fittest individuals for reproduction.

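Mathematically, one generation of a genetic algorithm can be described as a composition of operators, applied from the inside out:

\[ \text{Population}(t+1) = \text{Replace}\bigl(\text{Population}(t),\ \text{Mutate}(\text{Crossover}(\text{Select}(\text{Population}(t))))\bigr) \]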

where \( t \) denotes the generation number.

Methodology

The methodology of genetic algorithms involves several key steps:

Initialization

Generate an initial population of candidate solutions randomly or using heuristic methods.
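
As a minimal sketch of random initialization, the snippet below builds one binary and one real-valued population. The function names, the population sizes, and the `bounds` argument are illustrative assumptions, not part of any particular library.

```python
import random

def init_binary_population(pop_size, chrom_length, rng):
    """Return pop_size random bit strings, each a list of 0/1 genes."""
    return [[rng.randint(0, 1) for _ in range(chrom_length)]
            for _ in range(pop_size)]

def init_real_population(pop_size, bounds, rng):
    """Return pop_size real-valued vectors sampled uniformly within bounds.

    bounds is a list of (low, high) pairs, one pair per gene (an assumed format).
    """
    return [[rng.uniform(lo, hi) for lo, hi in bounds]
            for _ in range(pop_size)]

rng = random.Random(0)  # fixed seed so runs are repeatable
binary_pop = init_binary_population(pop_size=6, chrom_length=8, rng=rng)
real_pop = init_real_population(pop_size=4, bounds=[(-5.0, 5.0), (0.0, 1.0)], rng=rng)
```

A heuristic initialization would replace the random draws with problem-specific seeds, for example solutions produced by a greedy method.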

Selection

Evaluate the fitness of each candidate solution and select individuals for reproduction based on their fitness. Common selection methods include roulette wheel selection, tournament selection, and rank-based selection.
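
Two of the selection methods named above could be sketched as follows. This is an illustration under stated assumptions: fitness is maximized, the roulette wheel variant requires non-negative fitness values, and the function names and the tournament size `k` are hypothetical.

```python
import random

def tournament_select(population, fitnesses, k, rng):
    """Draw k distinct contestants at random and return the fittest one."""
    contestants = rng.sample(range(len(population)), k)
    best = max(contestants, key=lambda i: fitnesses[i])
    return population[best]

def roulette_select(population, fitnesses, rng):
    """Pick one individual with probability proportional to its fitness.

    Assumes all fitness values are non-negative and at least one is positive.
    """
    return rng.choices(population, weights=fitnesses, k=1)[0]

rng = random.Random(1)
population = ["a", "b", "c", "d"]
fitnesses = [1.0, 4.0, 2.0, 3.0]

# With k equal to the population size, the tournament always returns the best.
winner = tournament_select(population, fitnesses, k=4, rng=rng)

# Over many draws, fitter individuals are picked more often.
picks = [roulette_select(population, fitnesses, rng) for _ in range(1000)]
```

Smaller tournament sizes lower the selection pressure, which tends to preserve more diversity in the population.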

Crossover or Recombination

Combine pairs of parent solutions to produce offspring. This operator promotes the exchange of genetic material and exploration of new areas in the search space. Common crossover methods include single-point, two-point, and uniform crossover.
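
The following sketch shows single-point and uniform crossover on list-based chromosomes. It assumes both parents have the same length of at least two genes; the function names and the `swap_prob` parameter are illustrative.

```python
import random

def single_point_crossover(parent_a, parent_b, rng):
    """Cut both parents at one random point and swap the tails."""
    point = rng.randint(1, len(parent_a) - 1)  # never cut at the very ends
    child_a = parent_a[:point] + parent_b[point:]
    child_b = parent_b[:point] + parent_a[point:]
    return child_a, child_b

def uniform_crossover(parent_a, parent_b, rng, swap_prob=0.5):
    """Swap each gene between the two children independently with swap_prob."""
    child_a, child_b = list(parent_a), list(parent_b)
    for i in range(len(parent_a)):
        if rng.random() < swap_prob:
            child_a[i], child_b[i] = child_b[i], child_a[i]
    return child_a, child_b

rng = random.Random(2)
zeros, ones = [0] * 8, [1] * 8
sp_a, sp_b = single_point_crossover(zeros, ones, rng)
un_a, un_b = uniform_crossover(zeros, ones, rng)
```

Using all-zero and all-one parents makes it easy to see which parent each gene came from. Two-point crossover follows the same pattern with two cut points.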

Mutation

Introduce random changes to individual solutions to maintain genetic diversity and avoid premature convergence. Common mutation methods include bit-flip mutation for binary encodings and Gaussian mutation for real-valued encodings.
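
A minimal sketch of the two mutation methods mentioned above, assuming list-based chromosomes and a per-gene mutation probability `rate`. The names `rate` and `sigma` are illustrative choices.

```python
import random

def bit_flip_mutation(chromosome, rate, rng):
    """Flip each bit independently with probability rate."""
    return [1 - g if rng.random() < rate else g for g in chromosome]

def gaussian_mutation(chromosome, rate, sigma, rng):
    """Add zero-mean Gaussian noise (std. dev. sigma) to each gene with probability rate."""
    return [g + rng.gauss(0.0, sigma) if rng.random() < rate else g
            for g in chromosome]

rng = random.Random(3)
flipped = bit_flip_mutation([0, 1, 0, 1], rate=1.0, rng=rng)    # rate 1.0 flips every bit
untouched = bit_flip_mutation([0, 1, 0, 1], rate=0.0, rng=rng)  # rate 0.0 changes nothing
perturbed = gaussian_mutation([0.5, -1.2, 3.0], rate=0.5, sigma=0.1, rng=rng)
```

In practice the rate is usually kept small so that mutation perturbs solutions rather than randomizing them.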

Replacement

Form the next generation by replacing some or all of the population with new offspring. Strategies include generational replacement and steady-state replacement.
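
The two strategies could look like the sketch below. Note the assumptions: fitness is maximized, and the generational variant optionally keeps the `n_elite` best parents (elitism), which is a common addition rather than something the text above prescribes. All names are hypothetical.

```python
def generational_replacement(population, offspring, fitness, n_elite=1):
    """Build the next generation from offspring, keeping the n_elite best parents.

    With n_elite=0 the old population is replaced entirely.
    """
    elite = sorted(population, key=fitness, reverse=True)[:n_elite]
    n_needed = len(population) - n_elite
    survivors = sorted(offspring, key=fitness, reverse=True)[:n_needed]
    return elite + survivors

def steady_state_replacement(population, child, fitness):
    """Replace the single worst individual with child, if child is fitter."""
    worst = min(range(len(population)), key=lambda i: fitness(population[i]))
    new_population = list(population)
    if fitness(child) > fitness(population[worst]):
        new_population[worst] = child
    return new_population

population = [[1, 1, 0], [0, 0, 0], [1, 0, 0]]
offspring = [[1, 1, 1], [0, 1, 0], [0, 0, 1]]

next_gen = generational_replacement(population, offspring, fitness=sum, n_elite=1)
steady = steady_state_replacement(population, [1, 1, 1], fitness=sum)
```

Generational replacement changes the whole population at once, while steady-state replacement changes one individual per step and so evolves the population more gradually.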

Termination

Repeat the selection, crossover, mutation, and replacement steps until a termination condition is met, such as a maximum number of generations or a satisfactory fitness level.
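
The two termination conditions named above can be combined in a small check like this one. The function name, its parameters, and the default of 100 generations are assumptions for illustration.

```python
def should_terminate(generation, best_fitness, max_generations=100, target_fitness=None):
    """Stop when the generation budget is spent or the target fitness is reached.

    Assumes fitness is maximized; target_fitness=None disables the fitness check.
    """
    if generation >= max_generations:
        return True
    return target_fitness is not None and best_fitness >= target_fitness

stop_on_budget = should_terminate(generation=100, best_fitness=3.0)
keep_going = should_terminate(generation=5, best_fitness=3.0, target_fitness=10.0)
stop_on_target = should_terminate(generation=5, best_fitness=10.0, target_fitness=10.0)
```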

Technical Details

✔ Representation

Chromosomes

Candidate solutions, or chromosomes, can be represented in various ways depending on the problem domain. Common representations include binary strings, real-valued vectors, and permutations.
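
To make the three representations concrete, here is a small sketch. The decoding scheme (most significant bit first, linear scaling onto an interval) is one common choice assumed here for illustration, and the helper names are hypothetical.

```python
import random

def decode_binary(bits, lo, hi):
    """Map a bit string to a real number in [lo, hi], most significant bit first."""
    value = int("".join(str(b) for b in bits), 2)
    max_value = 2 ** len(bits) - 1
    return lo + (hi - lo) * value / max_value

def random_permutation(n, rng):
    """Return a random ordering of 0..n-1, e.g. a visiting order for n cities."""
    return rng.sample(range(n), n)

binary_chromosome = [0, 1, 0, 1]     # binary string, here encoding the integer 5
real_chromosome = [0.25, -1.5, 3.0]  # real-valued vector, one gene per variable
rng = random.Random(4)
tour = random_permutation(5, rng)    # permutation, each index appears exactly once

x = decode_binary(binary_chromosome, lo=0.0, hi=15.0)
```

The choice of representation constrains the operators: permutations, for instance, need crossover and mutation operators that keep every element exactly once.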

Fitness Function

The fitness function evaluates how well each candidate solution solves the problem. It assigns a fitness score to each individual, guiding the selection process. The design of the fitness function is crucial for the effectiveness of a genetic algorithm.
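
Two toy fitness functions, chosen here purely as examples, illustrate the idea: OneMax for binary chromosomes and a negated sphere function for real-valued ones. Both assume that higher fitness is better.

```python
def onemax_fitness(bits):
    """Count the 1-bits; the all-ones string is the best possible solution."""
    return sum(bits)

def sphere_fitness(x):
    """Negated sum of squares, so the maximum (zero) is at the origin."""
    return -sum(xi * xi for xi in x)

score_bits = onemax_fitness([1, 0, 1, 1])
score_real = sphere_fitness([1.0, 2.0])
```

Negating a cost is a simple way to turn a minimization problem into the maximization form assumed by many selection schemes.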

✔ Mathematical Formulation

Let \( \mathbf{P}(t) \) be the population at generation \( t \), and let \( f(\mathbf{x}) \) be the fitness function for a solution \( \mathbf{x} \).

The selection operator \( \text{Select}(\mathbf{P}(t), f) \) selects individuals based on their fitness:

\[ \mathbf{P}_{\text{selected}}(t) = \text{Select}(\mathbf{P}(t), f) \]

The crossover operator \( \text{Crossover}(\mathbf{P}_{\text{selected}}(t)) \) generates offspring:

\[ \mathbf{P}_{\text{crossover}}(t) = \text{Crossover}(\mathbf{P}_{\text{selected}}(t)) \]

The mutation operator \( \text{Mutate}(\mathbf{P}_{\text{crossover}}(t)) \) introduces random variations:

\[ \mathbf{P}_{\text{mutated}}(t) = \text{Mutate}(\mathbf{P}_{\text{crossover}}(t)) \]

The new population \( \mathbf{P}(t+1) \) is formed by replacing some or all of the old population with the new offspring:

\[ \mathbf{P}(t+1) = \text{Replace}(\mathbf{P}(t), \mathbf{P}_{\text{mutated}}(t)) \]

✔ Training

Objective Function

The objective function measures the quality of solutions, guiding the search process. It is problem-specific and must be carefully designed to reflect the desired outcomes.

Optimization

GAs use a population-based approach to search for optimal solutions. The optimization process involves iteratively applying genetic operators to evolve the population towards better solutions. The process can be described as follows:

1. Initialize the population randomly.
2. Repeat until the termination condition is met:
   - Evaluate the fitness of each individual.
   - Select individuals based on their fitness.
   - Apply crossover to produce offspring.
   - Apply mutation to introduce variations.
   - Form the new population by replacing some or all individuals.
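
The loop described above can be sketched end to end on the OneMax toy problem (maximize the number of 1-bits). Everything specific here is an assumption made for illustration: the problem, binary tournament selection, single-point crossover, bit-flip mutation, generational replacement with one elite individual, and all parameter values.

```python
import random

def onemax(bits):
    """Toy fitness: the number of 1-bits in the chromosome."""
    return sum(bits)

def tournament(population, fitnesses, rng, k=2):
    """Return the fittest of k randomly drawn individuals."""
    contestants = rng.sample(range(len(population)), k)
    return population[max(contestants, key=lambda i: fitnesses[i])]

def run_ga(chrom_length=20, pop_size=30, crossover_rate=0.9,
           mutation_rate=0.02, max_generations=200, seed=0):
    rng = random.Random(seed)
    # Initialize the population randomly.
    population = [[rng.randint(0, 1) for _ in range(chrom_length)]
                  for _ in range(pop_size)]
    best = max(population, key=onemax)
    history = [onemax(best)]  # best fitness seen after each generation

    for _ in range(max_generations):
        if history[-1] == chrom_length:  # optimum found, stop early
            break
        # Evaluate the fitness of each individual.
        fitnesses = [onemax(ind) for ind in population]
        offspring = [best[:]]  # elitism: carry the best individual over unchanged
        while len(offspring) < pop_size:
            # Select two parents based on their fitness.
            parent_a = tournament(population, fitnesses, rng)
            parent_b = tournament(population, fitnesses, rng)
            # Apply single-point crossover with probability crossover_rate.
            if rng.random() < crossover_rate:
                point = rng.randint(1, chrom_length - 1)
                child = parent_a[:point] + parent_b[point:]
            else:
                child = parent_a[:]
            # Apply bit-flip mutation to introduce variations.
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            offspring.append(child)
        # Form the new population (generational replacement).
        population = offspring
        best = max(population, key=onemax)
        history.append(onemax(best))
    return best, history

best, history = run_ga()
print(f"best fitness {history[-1]} after {len(history) - 1} generations")
```

Because the elite individual is copied unchanged into each new generation, the best fitness in `history` never decreases. Without elitism, the best solution found so far can be lost to crossover or mutation.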

Advantages and Limitations

✔ Advantages

Global Search Capability

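Because GAs maintain a population spread across the search space and rely on stochastic operators, they perform a global search and are less likely than a single-point local search to get trapped in local optima, although this is not guaranteed.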

Flexibility

GAs can be applied to a wide range of optimization problems, including those with complex, non-linear, and multi-modal search spaces.

✔ Limitations

Computational Cost

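GAs can be computationally expensive, especially for large populations and complex fitness evaluations, because every individual must be evaluated in every generation. They may require significant computational resources and time to converge to a good solution, and convergence to the global optimum is not guaranteed.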

Parameter Sensitivity

The performance of GAs is sensitive to the choice of parameters, such as population size, crossover rate, and mutation rate. Fine-tuning these parameters can be challenging and problem-specific.

References

- (Article) Genetic Algorithm: Review and Application, Manoj Kumar, Mohammad Husian, Naveen Upreti & Deepti Gupta