09. June 2024

Simulated annealing algorithms

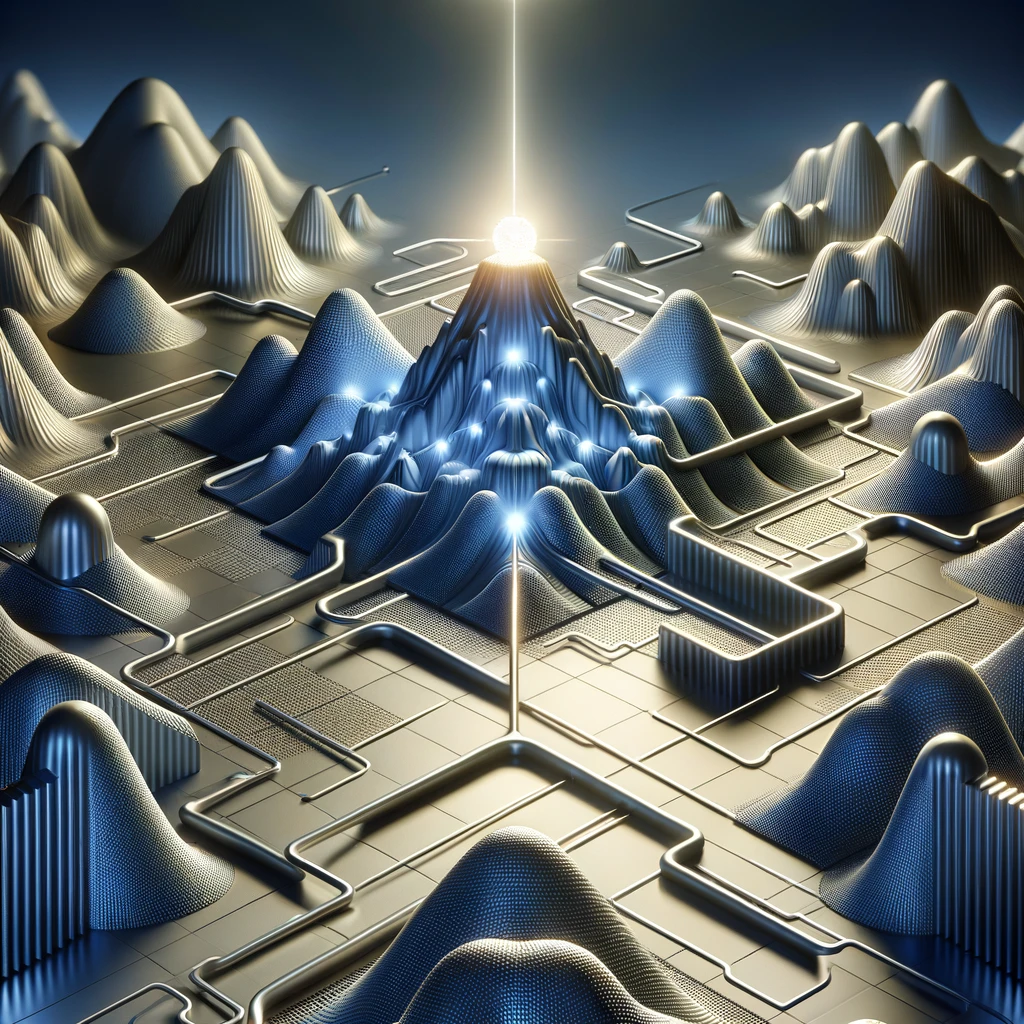

Metallurgy-based heuristic combining local and global optimization

Introduction

Simulated annealing is a probabilistic optimization technique inspired by the annealing process in metallurgy. This method is widely used for finding an approximate solution to complex optimization problems, particularly when the search space is large and contains numerous local optima. This technique was introduced by Kirkpatrick in 1983 to solve the Travelling Salesman Problem (TSP).

Principles of Simulated Annealing

Simulated annealing is based on the physical process of heating a material and then slowly cooling it to remove defects, minimizing the system's energy. This analogy is applied to optimization problems where the objective is to find a global minimum of a cost function. The key principles include:

Thermal Equilibrium

At each temperature, the system explores different states to reach a state of thermal equilibrium. At high temperatures, a poorer solution is more bound to be accepted and this probability decrease with the temperature value.

Gradual Cooling

The temperature is gradually reduced, allowing the system to transition from high-energy states to low-energy states, thus reducing the cost function.

The main advantage of SA is its ability to escape from local minima and converge to a global minimum.

Methodology

The simulated annealing process starts with an initial solution and then iteratively improves the current solution by randomly perturbing it and accepting the perturbation with a certain probability. The probability of accepting a worse solution is initially high and gradually decreases as the number of iterations increases.

The simulated annealing algorithm can be broken down into several key steps:

Initial Solution

Start with an initial solution \(x_0\) and an initial temperature \(T_0\).

Neighbourhood Search

Generate a neighbouring solution \(x'\) from the current solution \(x\).

Acceptance Criterion

Assume \(f(x)\) is the function returning the score evaluation of the solution \(x\), the lower the score is the better the evaluation is for the rest of the explanation. Decide whether to move to the new solution based on the criterion:

If \(f(x') < f(x)\), accept the new solution.

If \(f(x') \ge f(x)\), accept the new solution with a probability \(P\):

\(P = \exp(-(f(x') - f(x)) / T)\)

Temperature Update:

Reduce the temperature according to a cooling schedule. The function used can be customized to adapt the exploration-exploitation tradeoff.

Termination:

Repeat steps 2-4 until the system reaches a predefined stopping criterion, such as a minimum temperature or a maximum number of iterations.

Technical Details

✔ Cooling Schedule

The cooling schedule is crucial in simulated annealing. It determines how the temperature decreases over time. Common cooling schedules include:

Exponential

\(T = T \cdot \exp(-T / \tau)\), where \(\tau\) is a constant.

Sinusoidal

\(T = T \cdot \exp(-T / \tau) + i \cdot \sin(2\pi \cdot j \cdot k)\), where \(\tau\), \(i\), and \(j\) are constants, \(k\) is the iteration number.

Exponential Decay

\(T = T \cdot \alpha\), where \(\alpha\) is a constant less than 1.

Linear Decay

\(T = T - \beta\), where \(\beta\) is a constant.

Logarithmic Decay

\(T = T / \log(1 + k)\), where \(k\) is the iteration number.

✔ Raising Peaks during the Temperature Decrease

An interesting feature during the cooling scheduling is obtained by raising the temperature near from the initial value to improve the escaping of local optima. Here is an example of raising peaks using exponential and sinusoidal cooling schedule:

✔ Neighbourhood Function

The neighbourhood function defines how to generate a new solution from the current solution. This function should ensure that the entire search space is reachable from any starting point. Common strategies include:

Random Perturbation

Add a random value to the current solution.

Swap Mutation:

Swap two elements in the current solution, useful in combinatorial problems.

✔ Acceptance Probability

The acceptance probability allows the algorithm to escape local optima by accepting worse solutions with a probability that decreases over time. This probability is calculated using the criterion:

\(P = \exp(-(f(x') - f(x)) / T)\)

Where \(f(x)\) is the cost function, \(x\) is the current solution, \(x'\) is the new solution, and \(T\) is the current temperature. It is based on the Boltzmann probability distribution.

Advantages and Limitations

✔ Advantages

Global Optimization

Capable of escaping local optima to find a global optimum.

Simplicity

Easy to implement and requires few parameters.

Versatility

Applicable to a wide range of optimization problems.

✔ Limitations

Parameter Sensitivity

Performance depends on the cooling schedule and initial parameters.

Computational Cost

Can be slow for large problems due to the need for many iterations.

No Guarantee

Does not guarantee finding the absolute global optimum, only an approximation.